More Americans than ever need a license to work. But what do occupational licenses actually accomplish? This case study of one such license adds to a growing body of research that suggests this red tape does nothing but create needless barriers to work. It finds that a licensing scheme for tour guides in the District of Columbia had no effect on tour quality—and that customers rated tours just as highly after the District stopped licensing guides as they did before. These results point to a better way to ensure quality: empowering consumers to decide which service providers deserve their business. By eliminating unnecessary licensing, policymakers can put more people to work and give consumers more options—without compromising quality.

Executive Summary

In most of America, all a person needs to start working as a tour guide is something interesting to say. However, in several U.S. cities, aspiring tour guides must pass a test and get a government license before being allowed to work. And such licenses are just one piece of a much larger trend—today, more Americans than ever need a license to work. But what do these licenses actually accomplish?

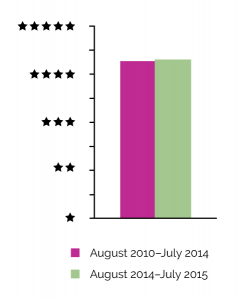

To find out, this report puts occupational licensing to the test, using the District of Columbia’s now-defunct tour guide licensing scheme as a case study. It finds that the scheme had no measurable effect on the quality of tours in the nation’s capital: An examination of nearly 15,000 TripAdvisor reviews reveals that consumers rated guided tours just as highly after D.C. stopped licensing guides in 2014 as they did before, despite the entry of many new and untested guides into the market. While the scheme was in force, consumers awarded D.C. tour companies an average of 4.27 out of 5 stars; after it was eliminated, the average rating was 4.30 stars.

Thus, instead of ensuring quality tours, D.C.’s licensing scheme just made it harder for some would-be guides to break into the business and kept others out altogether.

These findings point to a better way to encourage quality: consumers. Consumers—not licensing officials—kept D.C.’s tour guides on their toes while the license was in force, and they still do today. Through websites like TripAdvisor and Yelp, consumers weed out providers who fail to deliver quality.

This report adds to a growing body of research that finds many licenses do not improve services for consumers; they just shut people out. By eliminating licenses that exclude people for no public benefit, policymakers can expand economic opportunity and consumer choice—without compromising quality.

Introduction

In 2004, Tonia Edwards and Bill Main hit upon an innovative business idea: a company that would offer guided tours of different cities by Segway, the two-wheeled, self-balancing personal transportation vehicle. Tonia and Bill soon started Segs in the City, a company operating Segway tours in Annapolis, Baltimore and Washington, D.C. Their idea proved popular with tourists, but operations in the District ran into a snag. To legally share stories and descriptions of the nation’s capital, Tonia, Bill and any guides they employed needed to obtain tour guide licenses from the District’s Department of Consumer and Regulatory Affairs.

Securing each license required paying three separate fees totaling $200, submitting a time-consuming application and passing a 100-question multiple-choice examination about the District. Anyone caught describing, explaining or lecturing “concerning any place or point of interest in the District to any person” during a sightseeing trip without official permission faced fines of up to $300 or imprisonment for up to 90 days. 1

In most American cities, tour guides are free to share stories and describe sights without passing a test. For example, all Tonia and Bill needed to start their successful Maryland-based tour businesses was their idea and some Segways. Tour guides who wish to lead walking tours face almost no startup costs at all. However, a handful of cities have licensing schemes similar to what the District had. Charleston (South Carolina), 2 New Orleans, 3 New York, St. Augustine (Florida), and Williamsburg (Virginia) all force tour guides to pass a test before they can work. 4

Tour guide licensing is only one example of a broader—and growing—phenomenon of city and state governments demanding permission slips before people can work. More than 800 occupations are licensed somewhere in the United States, 5 and one in four American workers now needs a license to work. 6 In the 1950s that figure stood at about one in 20. 7 This growth is capturing widespread attention and concern. 8 In 2015, the White House released a report examining the trend and calling for reform to rein it in. 9

Concerns over rampant licensing stem in large part from the daunting hurdles it forces aspiring workers to clear: A 2012 Institute for Justice study of 102 low- and moderate-income occupations found that, on average, licensing regimes force prospective licensees to spend nine months in education or training, pay more than $200 in fees and pass one exam. 10 These hurdles create significant barriers to employment, entrepreneurship and job creation. During Segs in the City’s busy season, for example, about half its tours are led by part-time employees, mostly college students on summer break. 11 Studying for the licensing test and paying the fees required a heavy investment of time, energy and money for a job lasting only a few months.

Proponents justify licensure, and the burdens it imposes, by claiming that it weeds out substandard service providers, thereby ensuring quality. Quality assurance was, in fact, the District’s rationale for the tour guide test, the centerpiece of the licensing scheme. But can a test really ensure a high-quality tour experience? This report took advantage of a unique opportunity to find out—an opportunity that came when Tonia and Bill joined with the Institute for Justice to challenge D.C.’s tour guide test in federal court, and won.

In 2014, after nearly four years of litigation, the U.S. Court of Appeals for the D.C. Circuit struck down D.C.’s licensing scheme as a violation of the First Amendment. 12 That ruling also set the stage for this report, creating ideal conditions for an analysis comparing the quality of D.C. tour guides with and without the test. Looking at tour-goers’ satisfaction, as self-reported on TripAdvisor, this study detects no difference in tour guide quality following the end of the test and the entry of untested guides into the market. Not only that, it finds that quality was high both before and after the test ended. In other words, the test appears not to have made a difference to quality one way or the other.

These results add to a growing body of evidence suggesting that claims about licensing’s ability to ensure quality are, at best, exaggerated. Moreover, they point to alternatives to burdensome occupational licenses that empower consumers, not government bureaucrats, to decide which service providers deserve their business. These alternatives, consumer review websites and ordinary business incentives, were already at work in the D.C. tour market before the exam’s demise, and they remain at work now. They kept tour quality high when the test was in place and are still doing so without it, even as new guides are free to enter the market. These results give another reason to celebrate Tonia and Bill’s victory over the District of Columbia’s tour guide test—and have implications that reach far beyond the tour guide industry.

Occupational Licensing: A Growing Barrier to Work

Occupational licensing is a pervasive and growing reality for American workers. And much of this growth reflects an increase in the number of licensed occupations, not other factors such as the changing composition of the workforce. The White House’s 2015 report on licensing attributed almost two-thirds of the increase in the share of state-licensed workers to newly adopted licensing regimes. 13 Put simply, the primary reason that more workers need a license today is that states are licensing more jobs.

The expansion of occupational licensing means more burdens for aspiring workers, who may be required to earn a certain amount or type of education, complete specialized training or an apprenticeship, pass one or more exams, attain a certain age or grade level, pay fees and more. 14 This is often the case for occupations that are otherwise well suited to people on the first rungs of the economic ladder 15 —occupations like florist, packager, travel agent, shampooer, auctioneer and tour guide. Tests, like the one D.C. once required of tour guides, are a common barrier to occupations like these: The Institute for Justice’s 2012 study found that 79 out of the 102 low- and moderate-income jobs required at least one exam. 16

Scholarly research has shown that licensing burdens add up to fewer opportunities for workers, 17 and there is evidence that some groups are impacted more than others. People working in the low- and moderate-income occupations IJ studied in 2012 were more likely to be racial or ethnic minorities than members of the general population. They also tended to have less education. Compared with only 9.5 percent of the general population, 15.7 percent of workers in lower-income occupations had less than a high school education. 18 It is therefore likely that barriers to entering these occupations disproportionately affect minorities and those with less education.

In addition to burdening aspiring workers, licensing imposes costs on consumers because it restricts the supply of practitioners. 19 This means fewer choices and higher prices 20 as service providers are able to command higher wages due to their relative scarcity. 21 This observation points to a possible explanation for the growth in licensing: Practitioners themselves may pursue licensure of their occupations in order to capture the economic benefits it confers on those who hold licenses. And, indeed, academic research finds that occupational licensure in an industry is typically sought by insiders to that industry: existing practitioners of the occupation and their professional associations and companies. 22

Calls for licensure of an occupation are often accompanied by appeals to the need to ensure quality. 23 However, there is little research to bolster such claims. 24 The scholarly evidence, in fact, suggests that claims about the benefits of licensing to consumers in terms of higher quality are overstated. 25 The same goes for licensing tests: Academic research on occupational licensing in industries as diverse as floristry, construction contracting and education has found that testing is used by members of the occupation to restrict entry and raise wages with no positive effect on quality. 26 To take one example, a 2010 experiment put Louisiana’s florist licensing exam to the test, asking florists from licensed Louisiana and unlicensed Texas to judge floral arrangements from both states, without knowing which arrangements were from which state. The experiment essentially found no difference between the arrangements created by licensed and unlicensed florists. 27 Indeed, far from raising quality as proponents claim, some studies have shown that licensing may dampen innovation 28 and limit or even lower quality. 29

There is a large body of research on tour guides outside the U.S., some of which looks at tour guide quality. However, none explores quality from the perspective of what consumers actually value. Instead, questions of quality are mediated by experts and insiders, whose priorities may or may not align with those of consumers. And apart from this report, I know of no research that has looked at tour guide quality in the U.S. context. This report therefore makes a novel contribution to knowledge about tour guide licensing and quality in the United States. It also adds to the evidence casting doubt on claims that licensing, in general, ensures quality.

Testing the Test: Before and After D.C.’s Tour Guide Licensing Exam

Methods

To examine whether D.C.’s tour guide examination improved quality, this report relies on TripAdvisor consumer ratings of D.C. tour companies from before and after July 2014, when the D.C. Department of Consumer and Regulatory Affairs ended the test. TripAdvisor was chosen because it is the most popular consumer review travel website, with 375 million unique monthly visitors and over 250 million reviews of more than 5.2 million businesses as of July 2015. 30 There is no comparable resource compiling reviews of individual tour guides. However, many guides are employed by tour companies, making reviews of such companies a good proxy for determining tour guide quality. If D.C.’s test successfully weeded out lower-quality guides, one would expect to see a fall in the average tour company rating following the test’s removal.

I collected data from the TripAdvisor website for all D.C. attractions labeled as “Tours & Activities” and for all reviews of these companies made between August 1, 2010, and July 31, 2015. Historically, D.C.’s tourist season has two peaks: the busier one from mid-March through early June and a lighter one from September through October. 31 I therefore collected data for a full year following the change so as to capture both peak seasons. In all, I examined 14,762 consumer reviews across five years. The review information included the business name and website, date of the review, reviewer’s rating, number of reviews contributed by the reviewer at the time of collection, number of helpful votes received by the reviewer at the time of collection, and text of the review.
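The collection window and regime split described above can be sketched as a simple filter. This is a minimal illustration, not the report’s code: the `Review` fields and the function name `label_regime` are hypothetical, and the cutoff assumes that reviews dated from August 1, 2014, onward count as post-exam, which is one reading of the appendix’s “after July 2014.”

```python
from dataclasses import dataclass
from datetime import date

# Collection window stated in the report (both ends inclusive).
WINDOW_START = date(2010, 8, 1)
WINDOW_END = date(2015, 7, 31)
# Assumption: reviews dated on or after August 1, 2014, count as "no exam".
CUTOFF = date(2014, 8, 1)


@dataclass
class Review:
    # Hypothetical fields; the report's actual data layout is not published.
    business: str
    reviewed_on: date
    rating: int  # 1 to 5 stars


def label_regime(reviews):
    """Drop reviews outside the window and tag the rest as 'exam' or 'no_exam'."""
    labeled = []
    for r in reviews:
        if WINDOW_START <= r.reviewed_on <= WINDOW_END:
            regime = "no_exam" if r.reviewed_on >= CUTOFF else "exam"
            labeled.append((regime, r))
    return labeled


# Hypothetical reviews, not drawn from the report's data.
sample = [
    Review("Segway Co", date(2010, 7, 15), 5),  # before the window: dropped
    Review("Segway Co", date(2013, 4, 2), 5),
    Review("Bus Co", date(2014, 7, 20), 4),     # July 2014: still "exam" here
    Review("Bus Co", date(2015, 3, 9), 3),
]
for regime, r in label_regime(sample):
    print(regime, r.business, r.rating)
```

Moving `CUTOFF` to July 1, 2014, would instead place all July 2014 reviews in the post-exam group; the report does not say which convention it used.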

I calculated the average consumer rating per business before and after the test—disaggregated by type of tour (bike, boat, bus, mixed, Segway and walking). These raw numbers are useful but not sufficient for comparing consumer satisfaction during the time guides were required to take the test and after the test requirement was eliminated. For example, the moment the testing requirement was removed, all of the tour guides still fell under the old regime and had taken the exam; however, over time, many new guides entered the market who had not taken the exam. Additionally, some reviewers may be more reputable, and research suggests such reviewers have a larger impact on potential consumers’ choices. 32
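The per-business averages described above might be computed as follows. This sketch assumes reviews have already been tagged with a regime and tour type; the tuple layout and function names are hypothetical. Averaging company means within each cell, rather than pooling every review, is one reading of “average consumer rating per business,” not a confirmed detail of the report’s method.

```python
from collections import defaultdict
from statistics import mean


def business_averages(rows):
    """rows: iterable of (regime, business, tour_type, rating) tuples.

    Returns {(regime, business): (tour_type, mean_rating)}, so a company
    reviewed in both periods gets one average per period.
    """
    ratings = defaultdict(list)
    tour_types = {}
    for regime, business, tour_type, rating in rows:
        ratings[(regime, business)].append(rating)
        tour_types[(regime, business)] = tour_type
    return {key: (tour_types[key], mean(vals)) for key, vals in ratings.items()}


def average_by_type(biz_avgs):
    """Average per-company means within each (regime, tour_type) cell,
    so each company counts once regardless of its review volume."""
    cells = defaultdict(list)
    for (regime, _business), (tour_type, avg) in biz_avgs.items():
        cells[(regime, tour_type)].append(avg)
    return {cell: round(mean(avgs), 2) for cell, avgs in cells.items()}


# Hypothetical reviews for two bus companies, not the report's data.
rows = [
    ("exam", "A", "bus", 5), ("exam", "A", "bus", 3), ("exam", "B", "bus", 4),
    ("no_exam", "A", "bus", 4), ("no_exam", "B", "bus", 5), ("no_exam", "B", "bus", 5),
]
print(average_by_type(business_averages(rows)))
```

The design choice matters: pooling the three hypothetical no-exam reviews directly would give about 4.67 rather than 4.5, because company B’s two reviews would count twice.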

To control for these factors, which can cloud the comparison, and to determine whether there were differences in consumer satisfaction with and without the exam, this report relies on an interrupted time-series analysis. This analysis specifically controls for the effects of an intervention (in this case, the end of the testing requirement for tour guides) at a specific time. It can also detect differences that emerge only some time after the intervention, such as those caused by new, untested guides entering the market, while controlling for general changes over time that would have occurred without the intervention. Further details on the sample and analysis are provided in Appendix A.
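The report does not publish its model specification. A common way to run an interrupted time-series analysis is segmented regression: y_t = b0 + b1·t + b2·post_t + b3·(t − T0)·post_t, where post_t is 1 from the break period T0 onward. Here b2 captures an immediate level change and b3 a change in trend that builds up after the break, the kind of delayed effect new, untested guides would produce. The sketch below fits that model by ordinary least squares in plain Python; the function names, the monthly aggregation and the synthetic series are all assumptions for illustration.

```python
def solve(a, b):
    """Solve the square system a x = b by Gaussian elimination with
    partial pivoting. Inputs are lists and are not modified."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]  # augmented matrix
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):  # back substitution
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def segmented_regression(y, break_index):
    """OLS fit of y_t = b0 + b1*t + b2*post_t + b3*(t - break_index)*post_t.

    y: period averages in time order (e.g. monthly mean star ratings).
    break_index: index of the first period after the intervention.
    Returns [b0, b1, b2, b3]: baseline level, baseline trend,
    immediate level change, and change in trend after the break.
    """
    X = []
    for t in range(len(y)):
        post = 1.0 if t >= break_index else 0.0
        X.append([1.0, float(t), post, (t - break_index) * post])
    k = len(X[0])
    # Normal equations (X'X) b = X'y.
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(k)] for i in range(k)]
    xty = [sum(row[i] * yt for row, yt in zip(X, y)) for i in range(k)]
    return solve(xtx, xty)


# Synthetic, noise-free series: 60 months starting August 2010, so index 48
# is August 2014. These are made-up numbers, not the report's data.
months, brk = 60, 48
y = [4.2 + 0.001 * t + (0.05 - 0.002 * (t - brk) if t >= brk else 0.0)
     for t in range(months)]
print([round(b, 4) for b in segmented_regression(y, brk)])
```

On this noise-free series the fit recovers the coefficients used to build it. A real analysis would also report standard errors and check for autocorrelation in the residuals, which this sketch omits.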

Results

Tour guide businesses in D.C. are generally well regarded by reviewers. Seventy-two percent of the nearly 15,000 reviews collected for the period August 1, 2010, through July 31, 2015, awarded businesses 5 out of 5 stars. Another 13% gave 4 stars. The average overall rating for all the businesses was 4.3 stars.

Figure 1: Average Tour Guide Business Rating During and After the Exam Requirement

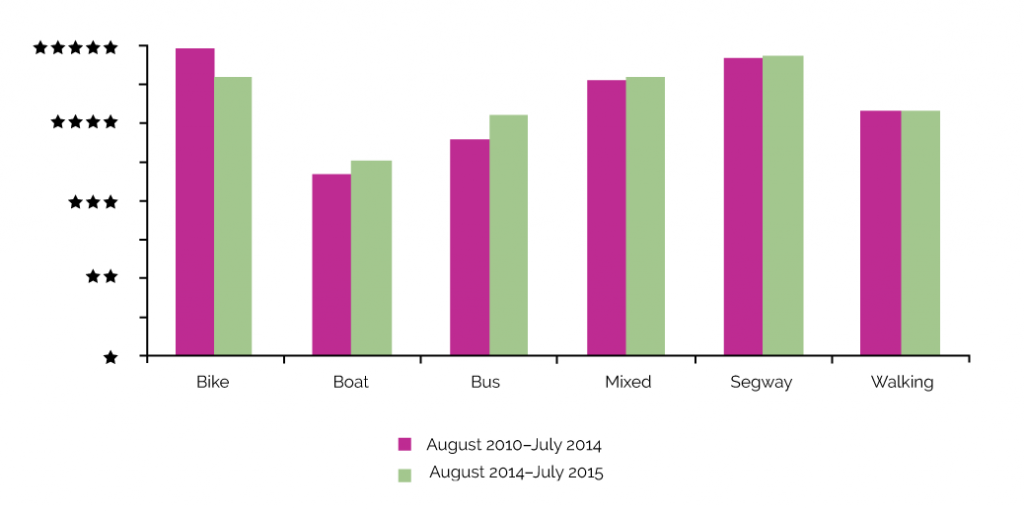

And these ratings did not change after the exam requirement was removed, even though, as of August 1, 2015, 272 new tour guides (16% of registered guides) had entered the D.C. market following the exam’s removal. 33 Overall, tour businesses provided tours of the same quality after the exam was eliminated as they had under the licensing regime: an average of 4.27 stars before and 4.30 after (see Figure 1). As Figure 2 shows, average ratings disaggregated by tour type shifted only slightly after the exam’s removal. The average rating increased for boat, bus, mixed and Segway tours, decreased for bike tours and was unchanged for walking tours. These differences are small, and none is statistically significant, either for the whole sample or for any tour type (see Appendixes). In other words, there is no evidence that they are related to the change in licensing regime.
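The 16 percent figure also implies the approximate size of the registered pool. Assuming the share is rounded to the nearest whole percent, a quick calculation gives:

$$
N_{\text{registered}} \approx \frac{272}{0.16} = 1{,}700,
\qquad
\frac{272}{0.165} \approx 1{,}648 \;\lesssim\; N_{\text{registered}} \;\lesssim\; \frac{272}{0.155} \approx 1{,}755
$$

That is, roughly 1,650 to 1,750 registered guides as of August 1, 2015, about one in six of them never having taken the exam.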

Figure 2: Average Tour Guide Business Rating During and After the Exam Requirement by Tour Type

Discussion & Conclusion

The only major change for D.C. tour guides in July 2014 was that the testing requirement went away. Absent that requirement, new guides entered the market, many of whom had never taken the test. If the test had really worked to screen out lower-quality tour guides, as its proponents theorized, one would expect to see a drop in quality among tour businesses. Yet customers rated tour quality just as highly following the test’s demise. It could be that the District’s test was simply a bad test and that a better-designed one would have done more to weed out substandard tour guides. But this study shows that quality was high while the test was in force and remained high after it ended, which leaves little evidence of substandard guiding for even a better test to screen out.

A more likely explanation for why D.C.’s test did not affect tour quality is simply that licensing tests are a poor way of ensuring tour guide quality. And, indeed, previous research suggests there is a mismatch between one-size-fits-all approaches to quality assurance, such as testing, and the tour guide occupation. In 2014, Weiler and Black, the leading academic authorities on the subject, published a global review of the scholarly research on tour guides and determined that the “blanket approach” of licensing may not be appropriate for all guides. 34 They also noted that licensing “does not necessarily … provide an incentive for excellence” or “support advanced levels of role performance”—i.e., expressive or interactive storytelling—among tour guides. 35

But why might a one-size-fits-all approach such as testing not be the right one for tour guides? To answer this question requires an understanding of the function of testing and the nature of the tour guide occupation.

All a test can do is restrict entry to the tour guide occupation to those who know and can recall a certain set of facts and stories under testing conditions. D.C.’s exam, for example, covered 14 categories of information from nine sources, most of them relating to traditional topics such as history and major points of interest. 36 But while historical and general interest tours are popular and common, not every guide wants to give such tours, and not every tour-goer wishes to go on them. Yet tests like D.C.’s force the guide who wants to focus on Civil War history, the guide who wants to specialize in tours of TV and film locations and the guide who wants to lead pub crawls to know the same information, much of it useless for what they want to do.

A related issue is that there are thousands of stories that guides might wish to tell about their cities, and not all of them deal with facts in the conventional sense. For example, since the 1970s, there has been an increase in the number of so-called alternative guides whose aim is to disseminate narratives counter or parallel to the “official” or “mainstream” stories of their cities. 37 Other guides may wish to focus on legends or ghost stories rather than historical facts. Tour guide tests force all guides, regardless of their unique perspectives and goals, to master information that may be irrelevant or even anathema to them—and exclude those who refuse or otherwise fail to conform.

But perhaps the biggest problem with using a test to decide who can work as a tour guide is the reality that there is more to good guiding and enjoyable tours than facts. Today’s tourists do not want to be lectured at 38 —they want to discover a place, to be immersed in the local culture and to have a unique experience, 39 whether that means watching costumed tour guides interact, shivering along with ghost stories, sampling local cuisine, or cruising around on Segways with Tonia and Bill. Consistent with these demands, research has found that tour-goers value guides’ ability to tell stories—to interpret, entertain, engage and inspire—more highly than they do guides’ knowledge about the destination or actual tour content. 40

This is not to say that factual knowledge is not important, only that there are many other qualities that people also look for in a tour guide, such as storytelling ability and charisma—qualities that a written test does not, and arguably cannot, measure. Tests like D.C.’s therefore risk shutting out gifted tour guides simply because they do not know or care to learn a particular set of facts that may not even be relevant to their tours or business models. Even for those who are willing and able to attempt them, such exams impose costs: Every hour they spend studying potentially useless or contested information is one they are not spending honing their storytelling skills, researching the topics they actually are interested in speaking about, or doing other productive things like earning money giving tours. And despite all that hard work, they may still fail because they focused on the wrong things or are poor test-takers. 41

The stories of three would-be guides who in January 2016 joined with the Institute for Justice to sue Charleston, South Carolina, over its tour guide test illustrate the human costs of licensing tests. 42 Kim Billups had everything she needed to launch her historical character tour company, right down to a replica antebellum gown. Everything, that is, except a license. Mike Warfield, an insurance broker and a popular volunteer at a local museum, earned an offer to lead evening ghost and pub tours of the city. But Mike did not have a license and so could not legally accept the job. Retired book editor Michael Nolan, who moved to Charleston in July 2015 after a lifetime spent helping others tell their stories, planned to supplement his income by telling some stories of his own as a tour guide, but he could not without a license. Despite countless hours spent studying Charleston’s 490-page “training manual” for prospective guides, all three failed the city’s written test, even Mike, who had passed the notoriously difficult Series 7 financial securities certification exam on his first try.

In response to the lawsuit, the city retained its 200-question written test but lowered the minimum passing score retroactively. It also eliminated the oral exam that aspiring guides had been required to take subsequent to passing the written test, 43 allowing Kim and Mike to collect their licenses in May 2016. Showcasing the arbitrary nature of tour guide exams and their passing requirements, Kim and Mike went from being potential threats to the public in the city’s eyes one day to fully sanctioned guides the next. They are free to give tours in Charleston, though only after about a year of preparation and waiting—and a constitutional lawsuit. Michael, for his part, is still waiting, unable to work as a tour guide. And they are only a few of the people who have been kept from offering tour experiences to willing customers by Charleston’s tour guide license. They—and the tour-going public—are poorer for their exclusion.

Licensing exams thus rob energetic and entrepreneurial people of the right to earn an honest living in the occupation of their choosing. They likewise rob customers of the chance to listen to, learn from and be entertained by guides shut out of the market. At the same time, they do little to ensure quality because knowing the facts they test often has little or no bearing on whether guides are good at their jobs, even if it might be useful in some cases. This certainly seems to have been the case in D.C., where the tour guide test appears to have had no impact on tour quality.

Furthermore, since quality was good both while the testing requirement was in force and after it ended and new, untested guides entered the market, something else must have been working to weed out poor guides. The findings here suggest that something, or rather someone, was consumers. Consumers will not recommend a tour they did not enjoy. In fact, they are likely to do the opposite, telling their friends, family and, nowadays, anyone with an internet connection about their negative experience through consumer review websites and social media. Meanwhile, positive word of mouth will drive consumers to tour providers with a reputation for quality. Poor tour guides and companies will be forced to improve or continue to lose market share and, before long, go out of business. With their dollars, their feet and their opinions, consumers provide feedback and make plain their expectations, which they can now share far beyond their personal social circles thanks to online platforms like TripAdvisor. And businesses, most of which are in the business of staying in business, respond.

Consumer reviews thus work in harmony with ordinary business incentives to keep quality up to par. Written for consumers by consumers, they are more attuned than any test or license to what consumers actually care about. Consumer reviews tell readers, often in quite granular detail, what a tour was like, whether it was a good value and other information more relevant to their experience than whether their guide passed a test or had a license. This information is helpful both to other consumers deciding which businesses deserve their patronage and to businesses trying to learn what consumers want and how to better serve them.

In short, any review—good, bad or indifferent—is information that can help businesses learn how to become more competitive. In this way, reviews serve both to ensure quality and to drive creativity and innovation. The following 4-star review of a D.C. tour company submitted to TripAdvisor while the test was still in force is a good example of how this can work:

I thought this tour was a fairly inclusive tour of the Washington monuments, although I would have liked to visit the Vietnam Veteran’s Memorial. However, I understand that there isn’t enough time in 3.5 hours to visit every memorial in the city (especially since some of them are actually outside of the city and in Arlington, VA!).

Having said that, I still felt very rushed at each monument and just didn’t have enough time to really see everything I wanted to see at each one. Our tour guide, Becca, was very knowledgeable and nice, however, I do think she should wait in front of the bus for everyone to disembark, so that those sitting at the back of the bus are not having to run to catch up. There were many times that I missed some of what she was saying because I had been sitting toward the back and it took a while to get off of the bus and catch up with her.

Overall, though, I thought it was a good overview of most of the main monuments. I just needed more time in my visit to go back to the ones I felt I didn’t have enough time at! 44

From this one review, consumers and the tour operator being reviewed could derive a wealth of useful information. Consumers interested in seeing most of the major Washington monuments learn that this tour could be a fine option, while consumers adamant about visiting the Vietnam Veterans Memorial Wall during their stay in D.C. learn that doing so will require a separate outing. The tour operator learns that it is, at least in one consumer’s view, doing a good job overall but should, among other things, consider giving people more time at the various attractions.

The D.C. Circuit Court, in its opinion in Tonia and Bill’s case, came to a similar conclusion about the value of consumer reviews for ensuring tour quality:

One need only peruse such websites [as Yelp and TripAdvisor] to sample the expressed outrage and contempt that would likely befall a less than scrupulous tour guide. Put simply, bad reviews are bad for business. Plainly, then, a tour operator’s self-interest diminishes—in a much more direct way than does the exam requirement—the harms the District merely hypothesizes. 45

And there is good reason to believe that what keeps tour guide quality high in D.C. is already working in other locations and for other occupations as well. The hundreds of U.S. cities that do not license tour guides do not appear to be beset with poor-quality guides. Moreover, while people know that restaurants, salons and auto shops must carry various licenses and permits and obey other laws, they also know that this means only that a service provider has jumped through certain government-mandated hoops. Licenses and permits say nothing about the quality of meals, haircuts or car repairs consumers can expect. So when people are deciding which businesses to patronize, they ask friends or colleagues for recommendations, or they visit websites like Yelp, Angie’s List or Thumbtack to read reviews. The same dynamic is visible in popular peer-to-peer services such as Uber, Lyft, Airbnb and eBay, which rely heavily on ratings to function. Now more than ever, consumers are aware of their power to demand quality, and they are enjoying the improvements that result.

Empowering consumers can be a more effective way than occupational licensing to ensure quality in products and services—especially given the growing body of research, including this study, that casts doubt on the ability of tests and other licensing schemes to promote quality. Evidence suggests that many licenses can be eliminated without compromising quality, particularly for those occupations such as tour guide where minor risks, if any, are easily mitigated by consumers opting to take their business elsewhere. City and state governments interested in expanding opportunity and consumer choice need only look to the nation’s capital, where tour guide freedom rolls on under the Segs in the City banner, for a glimpse of what is possible when people are cut loose from unnecessary red tape.

Appendix A: Methods

Sample

The final dataset contained 82 different tour guide businesses, including subsets of larger businesses, and 14,762 unique user reviews, 5,026 of which were submitted after July 2014, the month the exam requirement was removed. I also consulted each company’s website to determine the type of tours it provided (bike, boat, bus, mixed, Segway or walking) and the price of its lowest-priced tour.

Table A1 breaks down the sample of reviews by regime and tour type. The number of businesses differs between the periods with and without the exam because businesses entered and left the marketplace at different times. The analysis therefore includes some businesses that existed in only one period, making for an unbalanced panel. Average ratings are averages of each company’s average consumer rating, not of individual reviews.

Tour companies were removed if their tours were never led by a guide or if they were free, because such tours were exempt from licensing in D.C. I kept separate any business that turned out to be part of another business in the sample, because a consumer browsing the TripAdvisor website would see them as two separate sightseeing tours and might not know they belonged to the same company. Sub-businesses may also use different modes of transportation, or one may offer only guided tours while the other offers mixed tours (some with a guide and some without).
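The two exclusion rules above amount to a simple filter. This is a minimal sketch: the `TourCompany` record, its fields and the example company names are hypothetical, since the study does not publish its data layout.

```python
from dataclasses import dataclass


@dataclass
class TourCompany:
    # Hypothetical record layout; not the study's actual data schema.
    name: str
    has_guided_tours: bool  # True if at least some tours are led by a guide
    is_free: bool           # True if the company's tours are free


def licensing_relevant(companies):
    """Drop companies whose tours were never guided or were free,
    since D.C. exempted both from the licensing rule."""
    return [c for c in companies if c.has_guided_tours and not c.is_free]


sample = [
    TourCompany("Guided walk co.", True, False),
    TourCompany("Self-guided audio tour", False, False),
    TourCompany("Free volunteer walk", True, True),
    TourCompany("Mixed bus line", True, False),  # mixed: some tours guided, so kept
]
kept = licensing_relevant(sample)
print([c.name for c in kept])  # ['Guided walk co.', 'Mixed bus line']
```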

Table A1: Average Ratings by Tour Type

| Regime | Measure | Bike | Boat | Bus | Mixed | Segway | Walking | Total |
|---|---|---|---|---|---|---|---|---|
| Exam | Companies | 7 | 5 | 13 | 19 | 5 | 16 | 65 |
| | Reviews | 257 | 191 | 3,038 | 3,787 | 1,887 | 576 | 9,736 |
| | Avg. rating | 4.96 | 3.34 | 3.79 | 4.54 | 4.84 | 4.14 | 4.27 |
| No exam | Companies | 8 | 5 | 16 | 20 | 5 | 24 | 78 |
| | Reviews | 143 | 78 | 2,065 | 1,968 | 461 | 311 | 5,026 |
| | Avg. rating | 4.61 | 3.52 | 4.09 | 4.60 | 4.85 | 4.14 | 4.30 |
| All | Companies | 9 | 5 | 16 | 23 | 5 | 24 | 82 |
| | Reviews | 400 | 269 | 5,103 | 5,755 | 2,348 | 887 | 14,762 |
| | Avg. rating | 4.66 | 3.32 | 4.05 | 4.63 | 4.84 | 4.15 | 4.31 |
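As a rough consistency check on how the Total column of Table A1 was computed, the sketch below recombines the published per-type figures. Weighting each tour type's average by its number of companies reproduces the reported totals of 4.27 and 4.30, whereas weighting by number of reviews gives higher values, which fits the statement that average ratings are based on each company's average. The check assumes each type's average is itself a mean of company averages, and because the published inputs are rounded it is a plausibility check rather than an exact reproduction.

```python
# Figures from Table A1: tour type -> (companies, reviews, average rating)
TABLE_A1 = {
    "Exam": {
        "Bike": (7, 257, 4.96), "Boat": (5, 191, 3.34), "Bus": (13, 3038, 3.79),
        "Mixed": (19, 3787, 4.54), "Segway": (5, 1887, 4.84), "Walking": (16, 576, 4.14),
    },
    "No exam": {
        "Bike": (8, 143, 4.61), "Boat": (5, 78, 3.52), "Bus": (16, 2065, 4.09),
        "Mixed": (20, 1968, 4.60), "Segway": (5, 461, 4.85), "Walking": (24, 311, 4.14),
    },
}


def company_weighted(rows):
    """Weight each tour type's average rating by its number of companies."""
    n = sum(c for c, _, _ in rows.values())
    return sum(c * avg for c, _, avg in rows.values()) / n


def review_weighted(rows):
    """Weight each tour type's average rating by its number of reviews."""
    n = sum(r for _, r, _ in rows.values())
    return sum(r * avg for _, r, avg in rows.values()) / n


for regime, rows in TABLE_A1.items():
    print(f"{regime}: company-weighted {company_weighted(rows):.2f}, "
          f"review-weighted {review_weighted(rows):.2f}")
# Exam: company-weighted 4.27, review-weighted 4.33
# No exam: company-weighted 4.30, review-weighted 4.37
```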

Analysis

To test the District’s hypothesis that tour quality would decline without its exam, t-tests were run comparing average ratings before and after the regime change, for the whole sample and for each tour type. These tests found no statistically significant difference between the average consumer ratings of tour guide companies before and after the change.
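The report does not say which t-test variant was used. As an illustration only, the sketch below computes Welch's unequal-variance t statistic and its Welch-Satterthwaite degrees of freedom for two independent groups, one reasonable choice for an unbalanced panel in which some companies appear in only one period. The ratings in the example are hypothetical, not the study's data.

```python
from math import sqrt
from statistics import mean, variance


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom
    for two independent samples."""
    se2_a = variance(a) / len(a)  # squared standard error of mean(a)
    se2_b = variance(b) / len(b)  # squared standard error of mean(b)
    t = (mean(a) - mean(b)) / sqrt(se2_a + se2_b)
    df = (se2_a + se2_b) ** 2 / (se2_a ** 2 / (len(a) - 1) + se2_b ** 2 / (len(b) - 1))
    return t, df


# Hypothetical company-level average ratings under each regime.
with_exam = [4.5, 4.0, 3.8, 4.9, 4.2]
without_exam = [4.6, 4.1, 3.7, 4.8, 4.4, 4.3]
t, df = welch_t(with_exam, without_exam)
print(f"t = {t:.2f}, df = {df:.1f}")  # t = -0.15, df = 8.2
```

A p-value then comes from a t distribution with `df` degrees of freedom; where SciPy is available, `scipy.stats.ttest_ind(a, b, equal_var=False)` runs the same Welch test in one call.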

To further isolate the influence of the exam regime on each company’s average monthly consumer rating (Y), this analysis used ordinary least squares (OLS) regression in an interrupted time-series design with clustered standard errors. In equation (1), ratings were regressed on the study period measured in months (β1), a dichotomous variable for the presence of the testing regime (β2), a growth score counting the months since the test’s removal (β3), the company’s lowest tour price (β4) and tour type dummy variables (X). Equation (2) adds interaction terms between each tour type dummy and the period, regime and growth score variables to test whether individual tour types were affected by the change.

The dependent variable (Y) is each business’s average rating in each month of the study period. Each rating was weighted by the reviewer’s reputation signal, the number of helpful votes the reviewer had received: reviews were grouped into quantiles by that count, and each review’s weight depends on the quantile it falls in. Helpful votes are given to a reviewer when another user finds one of their reviews “helpful.” These votes serve as a measure of reputation and may reduce uncertainty for readers of reviews. 46
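A minimal sketch of this weighting, assuming reviews are binned into quartiles of the reviewer's helpful votes and that the bins receive weights 1 through 4. Both the number of bins and the weight values are hypothetical, because the report does not state them, and the review records are made up.

```python
from bisect import bisect_right
from collections import defaultdict
from statistics import quantiles

# Hypothetical reviews: (company, study-period month, star rating, reviewer's helpful votes)
REVIEWS = [
    ("A", 49, 5, 0), ("A", 49, 3, 40), ("A", 50, 4, 12), ("A", 50, 5, 1),
    ("B", 49, 2, 3), ("B", 49, 5, 7), ("B", 50, 4, 25), ("B", 50, 3, 60),
]


def quantile_weights(votes, n_bins=4):
    """Assign weight 1..n_bins by the quantile bin each vote count falls in.
    The 1..n_bins scale is a hypothetical choice, not the study's reported weights."""
    cuts = quantiles(votes, n=n_bins)  # n_bins - 1 cut points
    return [bisect_right(cuts, v) + 1 for v in votes]


def weighted_monthly_means(reviews, n_bins=4):
    """Weighted average rating (Y) for each (company, month) pair."""
    weights = quantile_weights([r[3] for r in reviews], n_bins)
    sums = defaultdict(lambda: [0.0, 0.0])  # key -> [sum of weight*rating, sum of weights]
    for (company, month, rating, _), w in zip(reviews, weights):
        sums[(company, month)][0] += w * rating
        sums[(company, month)][1] += w
    return {key: wr / wt for key, (wr, wt) in sums.items()}


means = weighted_monthly_means(REVIEWS)
for key, y in sorted(means.items()):
    print(key, round(y, 3))
# ('A', 49) 3.4
# ('A', 50) 4.25
# ('B', 49) 3.5
# ('B', 50) 3.429
```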

The following are the OLS interrupted time-series models used:

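$$
Y = \beta_0 + \beta_1(\text{Study period}) + \beta_2(\text{Regime}) + \beta_3(\text{Growth score}) + \beta_4(\text{Lowest price}) + X \tag{1}
$$

$$
\begin{aligned}
Y = \beta_0 &+ \beta_1(\text{Study period}) + \beta_2(\text{Regime}) + \beta_3(\text{Growth score}) + \beta_4(\text{Lowest price}) + X \\
&+ \beta_5(\text{Boat} \times \text{Period}) + \beta_6(\text{Boat} \times \text{Regime}) + \beta_7(\text{Boat} \times \text{Growth score}) \\
&+ \beta_8(\text{Bus} \times \text{Period}) + \beta_9(\text{Bus} \times \text{Regime}) + \beta_{10}(\text{Bus} \times \text{Growth score}) \\
&+ \beta_{11}(\text{Mixed} \times \text{Period}) + \beta_{12}(\text{Mixed} \times \text{Regime}) + \beta_{13}(\text{Mixed} \times \text{Growth score}) \\
&+ \beta_{14}(\text{Segway} \times \text{Period}) + \beta_{15}(\text{Segway} \times \text{Regime}) + \beta_{16}(\text{Segway} \times \text{Growth score}) \\
&+ \beta_{17}(\text{Walking} \times \text{Period}) + \beta_{18}(\text{Walking} \times \text{Regime}) + \beta_{19}(\text{Walking} \times \text{Growth score})
\end{aligned} \tag{2}
$$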
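To make the structure of equations (1) and (2) concrete, the sketch below builds one row of regressors for a single company-month. Bike tours are treated as the omitted reference category, since no bike dummy or bike interaction appears in equation (2). The function name `design_row` and the example inputs are hypothetical.

```python
TOUR_TYPES = ("Bike", "Boat", "Bus", "Mixed", "Segway", "Walking")
REFERENCE = "Bike"  # omitted category: equation (2) has no Bike dummy or Bike interactions


def design_row(tour_type, period, regime, growth, lowest_price, interactions=True):
    """Regressors for one company-month under equation (2), or equation (1)
    when interactions=False. Returns an insertion-ordered dict column -> value."""
    if tour_type not in TOUR_TYPES:
        raise ValueError(f"unknown tour type: {tour_type}")
    row = {
        "Intercept": 1,
        "Study period": period,
        "Regime": regime,
        "Growth score": growth,
        "Lowest price": lowest_price,
    }
    for t in TOUR_TYPES:
        if t == REFERENCE:
            continue
        dummy = 1 if t == tour_type else 0
        row[t] = dummy  # one of the tour type dummies in X
        if interactions:
            row[f"{t}*Period"] = dummy * period
            row[f"{t}*Regime"] = dummy * regime
            row[f"{t}*Growth score"] = dummy * growth
    return row


# Hypothetical bus company in study month 49 whose cheapest tour costs 30.
row = design_row("Bus", period=49, regime=0, growth=1, lowest_price=30)
print(len(row))  # 25: intercept, beta1-beta4, five dummies, beta5-beta19
print(row["Bus"], row["Bus*Period"], row["Boat*Period"])  # 1 49 0
```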

The study period is a count variable that runs from 1 for August 2010 to 60 for July 2015. The growth score is a count variable that stays at zero through month 48 (July 2014) and then counts upward from 1 at the regime change in month 49 (August 2014) to 12 in July 2015.
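The calendar coding described above fits in a small helper. It assumes the regime dummy equals 1 in periods 1 to 48 (through July 2014, while the exam applied) and 0 afterward; `time_variables` is a hypothetical name used only for illustration.

```python
def time_variables(year, month):
    """Map a calendar month to (study period, regime, growth score).

    Study period: 1 for August 2010 up to 60 for July 2015.
    Regime (assumed coding): 1 while the exam applied (periods 1-48), else 0.
    Growth score: 0 through period 48, then 1..12 for August 2014..July 2015.
    """
    period = (year - 2010) * 12 + (month - 8) + 1
    if not 1 <= period <= 60:
        raise ValueError("month outside the August 2010 to July 2015 study window")
    regime = 1 if period <= 48 else 0
    growth = max(0, period - 48)
    return period, regime, growth


for ym in [(2010, 8), (2014, 7), (2014, 8), (2015, 7)]:
    print(ym, time_variables(*ym))
# (2010, 8) (1, 1, 0)
# (2014, 7) (48, 1, 0)
# (2014, 8) (49, 0, 1)
# (2015, 7) (60, 0, 12)
```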

About half of the reviews were for companies that offered both guided and unguided tours, and there is no way to distinguish reviews of guided tours from reviews of unguided ones. The results are unchanged when these companies are removed. 47 A company fixed-effects model similar to equation (1) was also estimated, with the same results. The regression output for these analyses is available upon request.
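For readers unfamiliar with the company fixed-effects check, the sketch below shows the core idea with a single regressor: subtracting each company's own mean from both outcome and regressor (the within transformation), so that only variation inside a company identifies the slope. The data are hypothetical and built so that the true within-company slope is 0.5. The study's model has more regressors; any regressor that never changes within a company, such as tour type, is differenced away by this transformation.

```python
from collections import defaultdict


def within(groups, values):
    """Subtract each group's own mean: the fixed-effects 'within' transformation."""
    sums, counts = defaultdict(float), defaultdict(int)
    for g, v in zip(groups, values):
        sums[g] += v
        counts[g] += 1
    return [v - sums[g] / counts[g] for g, v in zip(groups, values)]


def centered_slope(x, y):
    """OLS slope of y on x for data that are already centered (mean zero)."""
    return sum(a * b for a, b in zip(x, y)) / sum(a * a for a in x)


# Hypothetical company-month data built as y = company effect + 0.5 * x.
company = ["A", "A", "A", "B", "B"]
x = [1.0, 2.0, 3.0, 2.0, 4.0]
y = [4.5, 5.0, 5.5, 3.0, 4.0]  # company A effect 4.0, company B effect 2.0

fe_slope = centered_slope(within(company, x), within(company, y))
pooled_slope = centered_slope(within(["all"] * 5, x), within(["all"] * 5, y))
print(fe_slope)                # 0.5
print(round(pooled_slope, 3))  # 0.038: pooled OLS mixes in the gap between companies
```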

Appendix B: Regression Results

| Variable | Eq. (1) coefficient | Robust SE | p | Eq. (2) coefficient | Robust SE | p |
|---|---|---|---|---|---|---|
| Period | 0.009 | 0.005 | 0.06 | -0.003 | 0.001 | 0.04 |
| Regime | -0.042 | 0.081 | 0.61 | 0.013 | 0.140 | 0.93 |
| Growth score | -0.019 | 0.011 | 0.08 | -0.005 | 0.016 | 0.76 |
| Lowest price | 0.004 | 0.002 | 0.01 | 0.003 | 0.001 | 0.02 |
| Boat | -1.356 | 0.256 | 0.00 | -1.514 | 0.387 | 0.00 |
| Bus | -0.751 | 0.254 | 0.00 | -1.931 | 0.527 | 0.00 |
| Mixed | -0.238 | 0.172 | 0.17 | -0.542 | 0.321 | 0.09 |
| Segway | 0.044 | 0.149 | 0.77 | -0.008 | 0.146 | 0.96 |
| Walking | -0.489 | 0.237 | 0.04 | -0.922 | 0.406 | 0.02 |
| Boat*Period | | | | 0.005 | 0.007 | 0.47 |
| Boat*Regime | | | | -0.137 | 0.270 | 0.61 |
| Boat*Growth score | | | | -0.046 | 0.038 | 0.23 |
| Bus*Period | | | | 0.030 | 0.012 | 0.01 |
| Bus*Regime | | | | 0.008 | 0.263 | 0.98 |
| Bus*Growth score | | | | -0.044 | 0.033 | 0.18 |
| Mixed*Period | | | | 0.006 | 0.008 | 0.46 |
| Mixed*Regime | | | | 0.123 | 0.196 | 0.53 |
| Mixed*Growth score | | | | 0.000 | 0.019 | 0.99 |
| Segway*Period | | | | 0.000 | 0.003 | 0.98 |
| Segway*Regime | | | | -0.042 | 0.163 | 0.80 |
| Segway*Growth score | | | | -0.006 | 0.017 | 0.73 |
| Walking*Period | | | | 0.014 | 0.008 | 0.08 |
| Walking*Regime | | | | -0.381 | 0.220 | 0.08 |
| Walking*Growth score | | | | -0.001 | 0.029 | 0.96 |
| Intercept | 4.253 | 0.229 | 0.00 | 4.705 | 0.134 | 0.00 |
| Sigma_u | 0.738 | | | 0.780 | | |
| Sigma_e | 0.778 | | | 0.767 | | |
| Rho | 0.473 | | | 0.508 | | |
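Sigma_u, Sigma_e and Rho are the variance components typically reported for panel models with company-level effects (Stata's `xtreg`, for example, prints them under those names). Assuming that convention applies here, rho is the share of the unexplained variance attributable to differences between companies, rho = sigma_u² / (sigma_u² + sigma_e²). Recomputing it from the reported, already rounded sigmas gives values within rounding of the Rho row:

```python
def rho(sigma_u, sigma_e):
    """Fraction of error variance due to company-level effects."""
    return sigma_u ** 2 / (sigma_u ** 2 + sigma_e ** 2)


for su, se, reported in [(0.738, 0.778, 0.473), (0.780, 0.767, 0.508)]:
    print(f"computed {rho(su, se):.3f}, reported {reported:.3f}")
# computed 0.474, reported 0.473  (the published sigmas are themselves rounded)
# computed 0.508, reported 0.508
```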